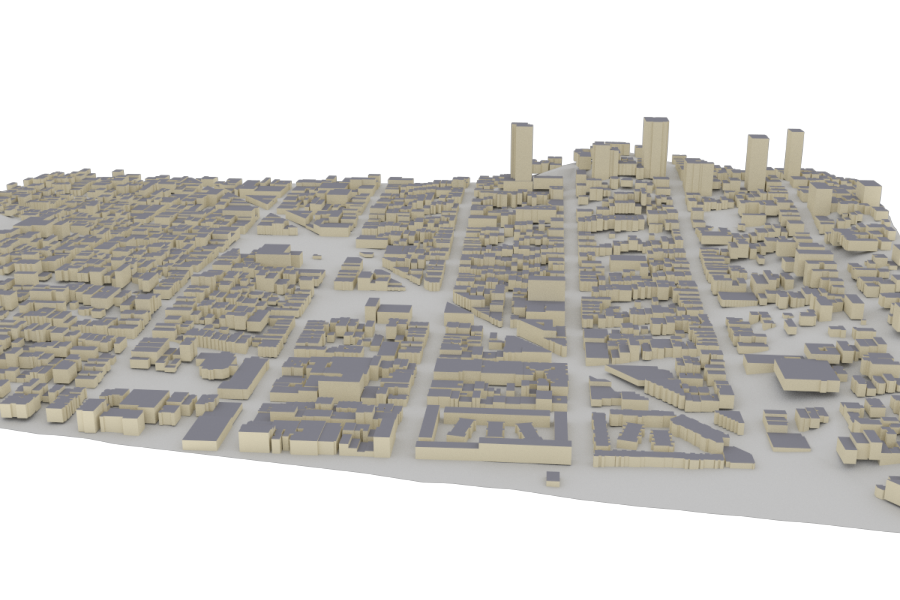

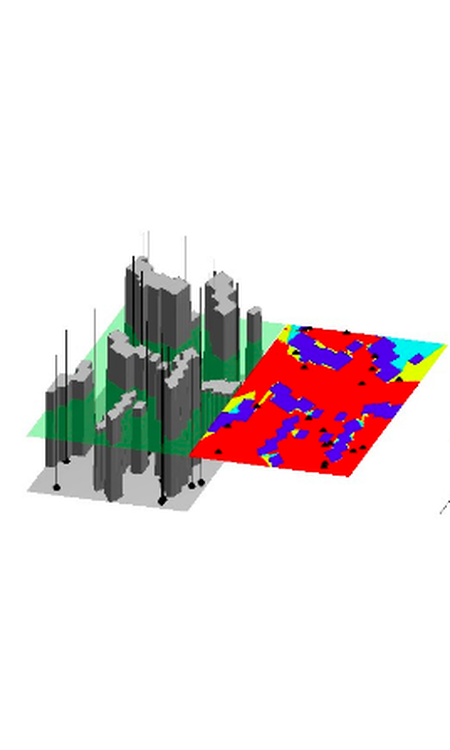

Optimal Transmitter Placement in Realistic Urban Environments

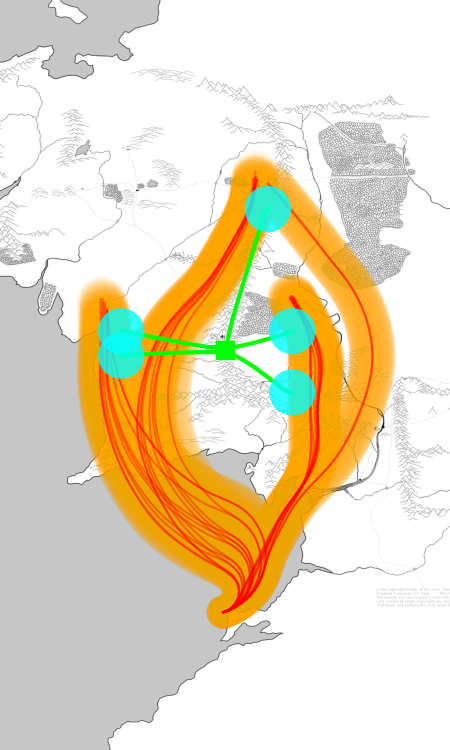

We develop a mathematically rigorous framework for optimally placing wireless transmitters in 3D urban environments, combining physics-accurate ray tracing with submodular optimization theory. Our algorithm, IA-SPA, comes with provable approximation guarantees and outperforms real-world AT&T and T-Mobile deployments by up to 215% in mean data rate.
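To make the submodular-optimization ingredient concrete, here is a minimal sketch of the classic greedy rule for a monotone submodular objective under a cardinality constraint, for which the well-known (1 − 1/e) approximation bound holds. This is not IA-SPA, whose details and guarantee are not given here. The coverage objective, the function name `greedy_max_coverage`, and the toy site data are all illustrative assumptions; a realistic objective would come from ray-traced signal quality rather than hand-written cell sets.

```python
from typing import Dict, Hashable, List, Set


def greedy_max_coverage(candidates: Dict[Hashable, Set[int]], k: int) -> List[Hashable]:
    """Greedily pick up to k sites, each time taking the largest marginal coverage gain.

    `candidates` maps a (hypothetical) site label to the set of grid-cell ids it covers.
    Set coverage is monotone and submodular, so this rule is within (1 - 1/e) of optimal.
    """
    chosen: List[Hashable] = []
    covered: Set[int] = set()
    for _ in range(k):
        best_site, best_gain = None, 0
        for site, cells in candidates.items():
            if site in chosen:
                continue
            gain = len(cells - covered)  # newly covered cells if this site is added
            if gain > best_gain:
                best_site, best_gain = site, gain
        if best_site is None:  # no remaining site adds coverage
            break
        chosen.append(best_site)
        covered |= candidates[best_site]
    return chosen


# Toy data, invented for illustration only.
sites = {
    "rooftop_a": {0, 1, 2, 3},
    "rooftop_b": {3, 4, 5},
    "pole_c": {0, 1},
    "pole_d": {5, 6, 7, 8, 9},
}
picked = greedy_max_coverage(sites, k=2)
print(picked)
```

The sketch stops early when no remaining site improves coverage, so asking for more transmitters than are useful returns a shorter list.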